Humanize the algorithm: Building an emotional architecture into conversational experiences.

Note: I started developing this framework around 2016 and wrote this article in 2019. While a lot has changed, both in the world and in my own knowledge, I feel the concept still holds true. Today we are already seeing AI with EQ, so I feel the question is: how will businesses inject the essence of their brand into AI? So, time travel back to the mid 20-teens and enjoy.

Conventional point-and-click and swipe-and-tap interaction patterns have evolved to include the more natural speak-and-receive interactions found in voice and conversational experiences. This is not a slow transition. Voice is the fastest-growing technology. Sales grew over 82% in a single year, from 114MM in 2018 to 208MM in 2019, well surpassing wearables, AR/VR and even smartphones and tablets. By 2020, 138MM US households (75%) will have a smart speaker and 30% of web browsing sessions will be done without a screen.

Conversations drive deeper emotional engagement, and the cognitive load is lighter because speaking is more natural than text-based interaction: we can say 150 words per minute, three times faster than we type. That heightens our expectation that voice experiences will make tasks much easier to complete. We expect these experiences to mimic real conversations and to follow the tone and emotion of a natural conversation, and as a result our patience is much shorter.

Today, we build unrealistic, black-and-white versions of the people engaging with our experiences, based on a data profile built from a binary history of clicks, likes, views and rules-based personalization. We then expect conversational experiences driven by an algorithm reading this profile to sound and act human.

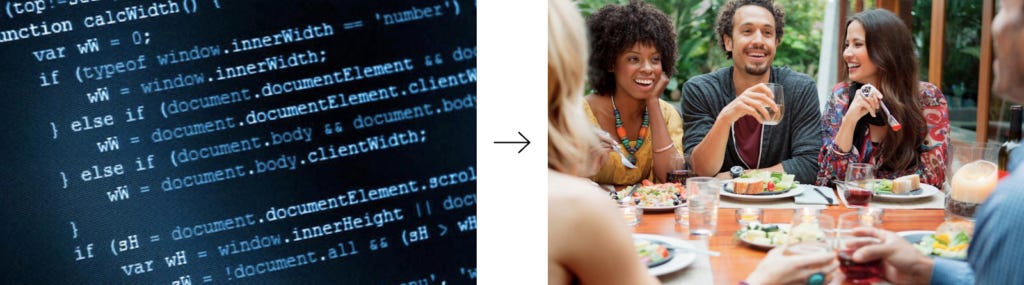

We expect binary code to be and act like this.

Contrast that with real life, which is messy and layered in shades of grey, with endless interpretation, emotion and perceptions of the world that change from moment to moment, and it’s not surprising that a bot or AI acts and sounds binary, cold and inhuman.

In reality, binary code makes us be and act more like this.

The frustration doesn’t come from bad code or malicious intent on the part of the creators. It comes from unrealistic expectations of how “perfect” AI is supposed to be. Unfortunately, this erodes trust and slows adoption because people perceive AI technologies as a novelty more than something helpful in their lives.

It’s not surprising, then, that this binary = binary equation is what leads AI and bots to fail. They don’t fail because they are programmed poorly or because they interpret data the wrong way; they fail because they don’t have the emotional intelligence needed to grasp nuance. As Oren Jacob, founder of chat tech company Pullstring and 20-year Pixar veteran, argues, artificial intelligence isn’t just about anticipating needs and providing data-driven recommendations; it’s about focusing on “carefully written conversations, filled with characters built from dialogs, that unfolds just like a Hollywood script.”

To meet these expectations, conversational experiences need to reflect an emotional intelligence that is true to the character and personality of the brand they represent and that can engage people through a much deeper range of emotions. This is where experience designers can and must play a critical role. It’s our responsibility to know what influences the decisions people make, how to create more natural conversations and how to deliver personal and contextual experiences. Layering in these humanistic qualities allows conversational experiences to move beyond the playback of canned responses. Building personality into the AI’s responses, together with the AI’s ability to learn and respond to our personality, will drive trust, adoption and value.

To do that, the conversational interfaces through which we engage AI and bots, visible or not, will need the ability to understand, use and respond to the full spectrum of emotions (joy, trust, fear, surprise, sadness, disgust, anger and anticipation) as illustrated by Robert Plutchik’s wheel of emotions.

Sherine Kazim, former Managing Director, Experience Design at Huge and now CEO of Wunderman Thompson, describes perfectly how this wheel can be used for digital experiences: “You can see in the chart that there is a dissipation of intensity that happens as you move further out on the wheel, and in design, nobody is talking about that yet. We currently design things that make people feel basic emotions–maybe joy or sadness–but we don’t talk about how we should adjust the [experience] according to how the user’s emotions may be intensifying or dissipating.”
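To make the idea of intensifying and dissipating emotions concrete, here is a minimal sketch of the wheel as a data structure. The eight emotion families and their three intensity levels follow Plutchik's wheel; everything else (the `EmotionReading` class, the `trend` function and the assumption that some upstream step has already classified each user message) is hypothetical and only for illustration.

```python
from dataclasses import dataclass

# Plutchik's eight primary emotions, each listed from mildest (outer edge
# of the wheel) to most intense (center of the wheel).
PLUTCHIK_WHEEL = {
    "joy": ("serenity", "joy", "ecstasy"),
    "trust": ("acceptance", "trust", "admiration"),
    "fear": ("apprehension", "fear", "terror"),
    "surprise": ("distraction", "surprise", "amazement"),
    "sadness": ("pensiveness", "sadness", "grief"),
    "disgust": ("boredom", "disgust", "loathing"),
    "anger": ("annoyance", "anger", "rage"),
    "anticipation": ("interest", "anticipation", "vigilance"),
}


@dataclass(frozen=True)
class EmotionReading:
    """Hypothetical output of an emotion classifier for one user message."""
    family: str  # one of the eight keys in PLUTCHIK_WHEEL
    level: int   # 0 = mild, 1 = basic, 2 = intense

    @property
    def label(self) -> str:
        return PLUTCHIK_WHEEL[self.family][self.level]


def trend(previous: EmotionReading, current: EmotionReading) -> str:
    """Describe how the emotion moved between two consecutive messages."""
    if previous.family != current.family:
        return "shifted"
    if current.level > previous.level:
        return "intensifying"
    if current.level < previous.level:
        return "dissipating"
    return "steady"


earlier = EmotionReading("anger", 0)  # annoyance
later = EmotionReading("anger", 2)    # rage
print(earlier.label, "->", later.label, ":", trend(earlier, later))
```

A designer could use the trend, rather than the single emotion label, to decide when a response should change course, for example by slowing down or offering a handoff once frustration is intensifying.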

Emotional Architecture layers in the needed nuance that transforms a cold text message into a personal engagement. Emotional Architecture does not replace humans or try to be a human. At its best, it augments the digital experience and strives to make it more adaptable, natural and relatable.

Adaptable:

Empathy—understanding the experience of another—is critical to a more meaningful engagement and allows responses to follow the fluidity of the conversation based on the context, language and tone of the person.

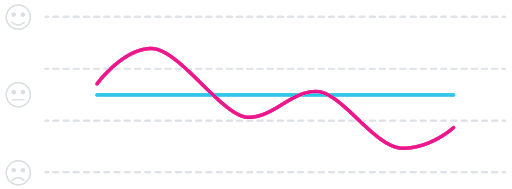

At the start, we’re still dealing with mostly canned responses and an inability to follow the person’s emotional arc throughout the conversation. The aim is to identify the gaps between the user’s behavior and the AI’s response, in order to establish a baseline and find opportunities to improve the experience.
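One way to picture that baseline is as a simple audit of past conversations. The sketch below assumes each turn has already been labeled, by hand or by a classifier, with the user's emotion and the tone of the AI's response. The `EXPECTED_TONE` mapping, the tone names and both functions are hypothetical placeholders, not part of any real tool.

```python
# Hypothetical mapping from the user's emotion to the tone a response
# should take. A real brand would define its own.
EXPECTED_TONE = {
    "anger": "calming",
    "fear": "reassuring",
    "sadness": "supportive",
    "joy": "upbeat",
}


def find_gaps(turns):
    """Return the turns where the response tone missed the user's emotion.

    `turns` is a list of (user_emotion, response_tone) pairs.
    """
    gaps = []
    for index, (user_emotion, response_tone) in enumerate(turns):
        # Emotions without a mapping fall back to a neutral tone.
        expected = EXPECTED_TONE.get(user_emotion, "neutral")
        if response_tone != expected:
            gaps.append((index, user_emotion, response_tone, expected))
    return gaps


def baseline_gap_rate(turns):
    """Share of turns with a mismatch; 0.0 for an empty conversation."""
    return len(find_gaps(turns)) / len(turns) if turns else 0.0


conversation = [
    ("joy", "upbeat"),
    ("anger", "neutral"),   # canned reply ignores the user's frustration
    ("anger", "neutral"),
    ("sadness", "supportive"),
]

for index, emotion, got, wanted in find_gaps(conversation):
    print(f"turn {index}: user felt {emotion}, reply was {got}, expected {wanted}")
print("baseline gap rate:", baseline_gap_rate(conversation))
```

The gap rate gives a starting number to improve against, and the list of mismatched turns points to the specific moments where a canned response lost the emotional thread.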

Natural:

Building in empathy will help create an approachable, human language to drive consistency at all touchpoints.

Familiarity creates a perception that a task is easy to complete, regardless of the reality. (Think TurboTax: they didn’t make the tax code simpler, they just made it easier to complete and file taxes, which makes taxes themselves seem easier.)

Relatable:

Deliver personal conversations that anticipate needs and increase satisfaction.

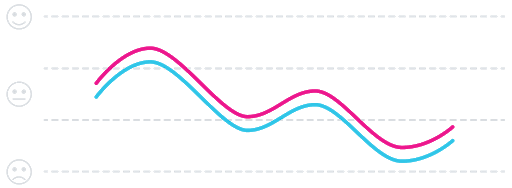

Sympathy (a reciprocal relationship between two people) creates a mutual value exchange based on the story arc and learnings of multiple engagements. (Open questions: do “nudges,” choice architecture and influence live here? Does this go hand in hand with anticipating needs?)

Injecting a layer of emotion into an AI experience requires a relentless pursuit of simplicity. It’s a deliberate decision to focus on designing moments that digitize the human, face-to-face experience. When done right, simplicity hides the complexity of the business (jargon, processes, organization) from the user and doesn’t make the business’s needs the user’s problems.